Archive for the ‘web applications’ Category

- In: JavaScript | PHP | web applications

Being frustrated with the TinyMCE plugin for Expression Engine, I decided to create a rich text editor plugin for Expression Engine using the YUI library’s Simple Editor.

Due to a magic combination of:

- the awesomeness of the YUI library

- the thoroughness of the YUI documentation

- the simplicity of creating extensions for Expression Engine

it was surprisingly straightforward.

If you’re using Expression Engine and are either sick of fighting with TinyMCE or aren’t using a rich text editor, you can download it from http://code.google.com/p/ee-yui/.

Serf, Search APIs and secret sauce

Posted on: July 11, 2008

- In: API's | databases | semantic web | serf | web applications

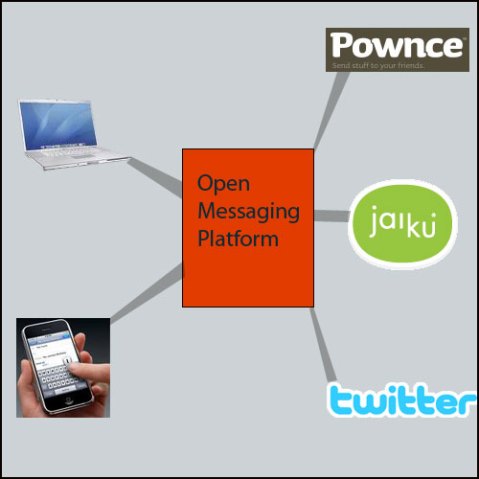

I’ve started building Serf – a personal project which I hope people will find useful. I’ve had plenty of ideas over the years but the idea behind Serf is something I find fascinating and feel compelled to pursue.

I’m going to keep it under wraps until it’s released (probably mid-October, if not sooner) but I can say it’ll involve hooking into some large APIs as well as some semantic web action. I’ll post notes as I can, as I’m sure I’ll need to solve some interesting problems along the way.

How do you get listed as having 97% uptime when your service was down for almost a full day in May?

97% sounds like a lot – pretty good in fact. Right?

The reality is that 1 day is around 3% of a calendar month. This means you can effectively take your service out for a full day each month and still claim 97% uptime (assuming no other outages during the month).
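To make the arithmetic concrete, here is a minimal Python sketch. The function names `uptime_percent` and `allowed_downtime_hours` are made up for illustration, and it assumes a 31-day month such as May.

```python
HOURS_PER_DAY = 24


def uptime_percent(downtime_hours: float, days_in_month: int) -> float:
    """Uptime as a percentage of all hours in the month."""
    total_hours = days_in_month * HOURS_PER_DAY
    return 100.0 * (total_hours - downtime_hours) / total_hours


def allowed_downtime_hours(uptime_target_percent: float, days_in_month: int) -> float:
    """Hours of downtime that still meet the given uptime target."""
    total_hours = days_in_month * HOURS_PER_DAY
    return total_hours * (100.0 - uptime_target_percent) / 100.0


if __name__ == "__main__":
    # One full day (24 hours) of downtime in a 31-day month.
    print(f"{uptime_percent(24, 31):.1f}%")               # 96.8%
    # Downtime budget for a 97% claim over the same month.
    print(f"{allowed_downtime_hours(97, 31):.1f} hours")  # 22.3 hours
```

On these assumptions, a 97% claim leaves room for roughly 22 hours of outage in the month, which is why “almost a full day” of downtime still fits.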

Your customers, however, may not see it so positively…

wordpress as simple cms

Posted on: March 12, 2008

I’ve worked on a project where I used WordPress as a CMS for a (non-blog) content-driven site and was impressed by how flexible it was. I did cheat, however: I skirted around having to create an entire theme by creating simple PHP pages and pulling the data out using the post ID, as I thought WordPress wouldn’t function as well as a content site when using a theme.

Way wrong. I’m looking forward to using WordPress again, but properly, using some of the information in the following links:

why do we need offline apps?

Posted on: March 12, 2008

ReadWriteWeb wrote a while ago about Firefox 3 adding offline support for web applications. Around the same time, the Google Gears announcement came out and kind of fizzled. (Anyone using Google Gears? Anyone?)

I put this aside, but recently discovered that companies are investing time and money into creating offline versions of online web apps and using web app APIs to maintain data consistency. Take this offline front end for Basecamp as an example.

Forgive me here, but isn’t connection to the internet becoming MORE pervasive every year? We now have not only desktop PCs accessing and modifying information on the internet, but also laptops, mobile phones, net-enabled devices and even ambient devices. The future is kind of banking on increased access to ‘the cloud’ in order for everything to tie together.

If today was 10 years ago, then this would make sense, but offline applications simply make no sense to me. If you feel the need for offline apps it seems you need to ask yourself why. If it’s a connection quality issue then it’s your connection which needs dealing with. If it’s commuting then surely there are far simpler tools you probably already have (text editor, word processor) which can perform the same basic functions until you’re online again.

If it’s about using the browser as a platform, that’s been happening with Mozilla and Firefox for years via XUL and the Mozilla engine, which products like Komodo use to make cross-platform applications much easier to build.

The only reason I see for this development (especially in Firefox 3) is so Google can add simple offline support to its office suite in order to remove one more Enterprise excuse, but it still confounds me that time and energy are being sunk into offline support.

I’ll wait patiently to be educated…

The base documentation from Adobe is pretty good, but there are a couple of gotchas which aren’t immediately clear.

- You need to copy AIRAliases.js from

<air install dir>/frameworks

into your application directory (the same place you’ve put your XML config file and sample HTML file).

If you try to test the sample application without doing this, you’ll get an error.

- My Windows setup is weird about PATH variables for some reason. If you get the standard ‘adl is not recognized as an internal command’ error, you can simply put the full path to the adl executable in your command like so:

<air install dir>/bin/adl.exe HelloWorld-app.xml

- By default, when an application is packaged, the ADT process attempts to contact a time server to generate a timestamp. If you use a proxy server to connect to the internet, you’ll get a ‘connection refused’ error.

You can get around this by adding ‘-tsa none’ before the file component of the command like so:

adt -package -storetype pkcs12 -keystore sampleCert.pfx -tsa none HelloWorld.air HelloWorld-app.xml HelloWorld.html AIRAliases.js
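If you script your builds, the ordering of that command can be captured in a small helper. This is only a sketch: `build_adt_package_command` is a hypothetical function, not part of the AIR SDK, and it assumes `adt` is reachable on your PATH (pass a full path via the `adt` parameter if not). It builds the argument list without running it, placing ‘-tsa none’ with the signing options, ahead of the output file.

```python
from typing import List, Sequence


def build_adt_package_command(
    air_file: str,
    descriptor: str,
    files: Sequence[str],
    keystore: str,
    storetype: str = "pkcs12",
    skip_timestamp: bool = True,
    adt: str = "adt",  # assumption: adt is on PATH; otherwise pass the full path
) -> List[str]:
    """Return the argument list for an `adt -package` call (hypothetical helper)."""
    command = [adt, "-package", "-storetype", storetype, "-keystore", keystore]
    if skip_timestamp:
        # Signing options go before the output .air file, so -tsa none lands here.
        command += ["-tsa", "none"]
    # Output file first, then the application descriptor, then the bundled files.
    command += [air_file, descriptor, *files]
    return command


if __name__ == "__main__":
    cmd = build_adt_package_command(
        air_file="HelloWorld.air",
        descriptor="HelloWorld-app.xml",
        files=["HelloWorld.html", "AIRAliases.js"],
        keystore="sampleCert.pfx",
    )
    print(" ".join(cmd))
```

The resulting list could be handed to `subprocess.run(cmd)` from the application directory. Setting `skip_timestamp=False` leaves out ‘-tsa none’ for machines that can reach the time server directly.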

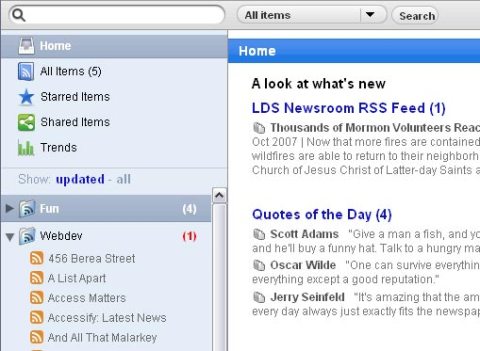

OK, that’s a bit rough – Google Reader isn’t that bad, and after the Newshutch fiasco I’ve needed to find another online RSS reader pronto.

Google Reader was OK, but this post from Derek Featherstone led me to a wee discovery to make my Google Reader-ing much more pleasant.

- Install the ‘stylish’ extension for Firefox

- Load this userstyle for Google Reader

- Bask in a much improved UI ‘inspired’ by OS X

Note: I’m not a Mac fanboy (I don’t even own an iPod forgoodnesssake) but there is no doubt that the OSX style UI is way, way nicer than Google’s offering. Just what is Doug Bowman doing over there anyway?
